This article explains how to get started with the Spark shell on Windows. Based on the documentation on Spark's website, it seemed like it would be a breeze. However, I made several mistakes along the way, so getting started took longer than I had expected. This is what worked for me on a Windows machine:

- First, download a Spark build that is packaged with Hadoop: http://spark.apache.org/downloads.html

- Next, go to Windows=>Run and type cmd to get the DOS command prompt.

Note: Cygwin may not work. You will have to use the DOS prompt.

- Change into the Spark installation directory (Spark's home directory).

- Next, at the command prompt, type

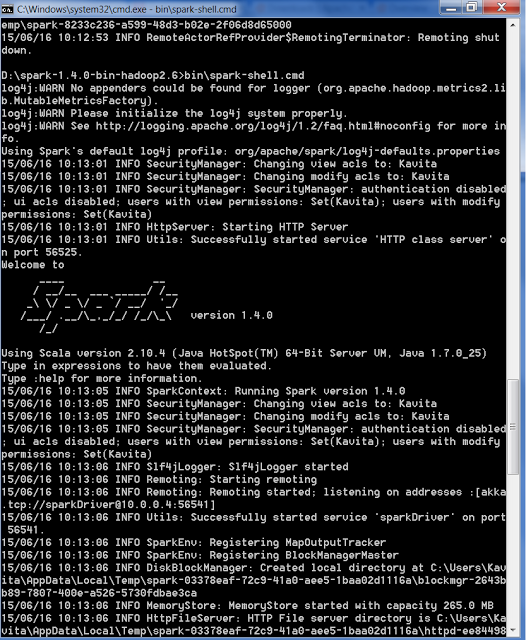

bin\spark-shell.cmd - You should see something like this:

- Once Spark has started, you will see a prompt "scala>".

- If Spark initialized correctly, then when you type

val k=5+5

at the "scala>" prompt, you should get back:

k: Int = 10

If you don't, then Spark did not start correctly.
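The check above only exercises the Scala interpreter. As a slightly stronger test, the following minimal sketch runs a small job through Spark itself. It assumes you paste it at the "scala>" prompt of a running Spark shell, where the shell has already defined `sc` (the SparkContext); the names `nums` and `total` are just illustrative.

```scala
// Run inside the Spark shell, where `sc` (the SparkContext) is already defined.
// Assumption: the shell started without errors; `nums` and `total` are illustrative names.
val nums = sc.parallelize(1 to 10)   // distribute the numbers 1 to 10 as an RDD
val total = nums.reduce(_ + _)       // add them up by running a Spark job
println(s"Spark ${sc.version} computed total = $total")  // total should be 55
```

If this fails while `val k=5+5` works, the Scala prompt is up but Spark itself probably did not initialize correctly.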

- Another check is to open your Web browser and go to http://localhost:port_that_spark_started_on, where port_that_spark_started_on is the port shown on the startup screen. It is usually 4040, but it can be a different value if Spark had trouble binding to that port.
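If you are not sure which port Spark ended up on, a rough way to find out is to probe a few ports from Scala. The sketch below is only an illustration: the helper `isPortOpen` is a hypothetical name, and the range 4040 to 4045 is an assumption based on Spark usually trying the next port up when 4040 is taken. It only tells you that something is listening, not that it is Spark, so the startup screen remains the authoritative source.

```scala
import java.net.{InetSocketAddress, Socket}
import scala.util.Try

// Hypothetical helper: true if something accepts TCP connections on host:port
// within timeoutMs milliseconds, false if the connection is refused or times out.
def isPortOpen(host: String, port: Int, timeoutMs: Int = 1000): Boolean =
  Try {
    val socket = new Socket()
    try socket.connect(new InetSocketAddress(host, port), timeoutMs)
    finally socket.close()
  }.isSuccess

// Assumption: the UI is on 4040 or one of the next few ports.
val candidates = 4040 to 4045
candidates.find(p => isPortOpen("localhost", p)) match {
  case Some(p) => println(s"Something is listening at http://localhost:$p")
  case None    => println("Nothing is listening on ports 4040-4045")
}
```

You can paste this into the Spark shell itself or run it in any Scala REPL on the same machine.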